July 16th 2025

So, if our eyes can’t catch them, what does? The answer lies in how AI sees and thinks differently than we do.

Imagine you’re inspecting a counterfeit banknote. You might look for obvious errors. But a machine inspects it for subtle anomalies, ink patterns, and micro-text that a human would never notice. That’s how AI approaches deepfake detection.

Instead of seeing a whole, recognisable face, deepfake detection AI processes content at a granular level, looking for microscopic inconsistencies and deviations from real-world physics and human biology.

Here’s a glimpse into what AI “sees”:

No human, no matter how vigilant, can spot these flaws, especially as deepfake technology continues to advance. This is precisely why AI is essential to fight AI.

Tools like VerifyLabs.AI leverage sophisticated algorithms and massive datasets to act as your digital detective, scanning for these invisible tells. We don’t rely on gut feelings; we rely on deep, data-driven analysis to tell you what’s real and what’s a dangerous fabrication.

Equip yourself with the power of AI to see what your eyes can’t.

July 16th 2025

It’s evening in a corporate office in a major world capital. The hustle and bustle has thinned as colleagues start to go home. An executive sits at their desk, wanting to tie up due diligence before leaving for the nightly commute.

The exec is examining a new client’s details and is uploading a scan of their passport.

It looks fine. The photo is nice and sharp. The layout is clear and all the markings are exactly where they should be.

Nothing about the passport makes the exec want to check any further. The proofs of address and other forms of ID also look good.

Nevertheless, they’re feeling uneasy.

Something the client said on their Zoom call is bothering them.

The client said the weather was sunny, but if they were in London, where they claimed to be, they’d have known that it had been pouring with rain for the last two weeks.

In the meeting, the exec explained it away, thinking the client was being ironic or attempting humour. But the exec’s tummy feels inexplicably tight and somehow off and, despite being tired, they wonder what to do.

If this were you, would you:

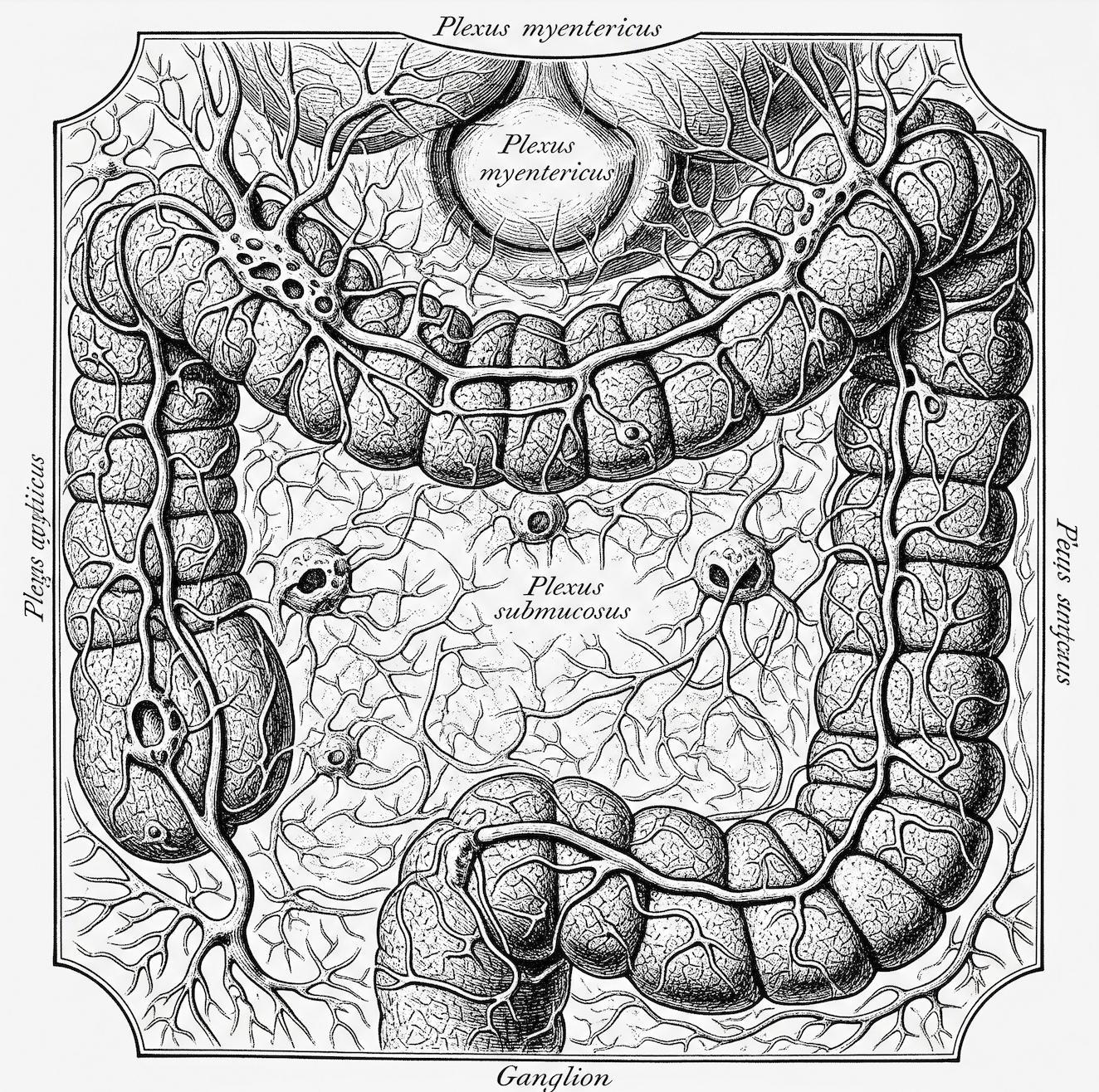

Our gut-brain connection is a powerful analytics system that often “knows” that further checks are needed before our conscious minds do. When faced with complex decisions where data is incomplete or overwhelming, your gut integrates a vast number of subconscious variables that your logical mind might overlook.

Your gut instinct is not a mystical feeling; it’s a biological and neurological event rooted in four key scientific principles:

Listening to your gut is listening to a powerful form of protective intelligence: a combination of real-time data from your “second brain” and high-speed analysis from your subconscious mind.

There are many accounts of deepfake attacks where victims override their initial bodily intuition, explaining it away.

Listen to your gut if:

Always verify it first.

July 16th 2025

We asked Gemini 2.5 Flash to use everything it knows (including the latest research and common limitations of current generative AI) to tell us how to spot deepfakes that are too good for the human eye to detect.

Gemini had a 3-second think about things and then said that the giveaways often lie in subtle, systemic inconsistencies in physiological and environmental details that betray a lack of genuine understanding of physics and human biology.

Here are its findings:

The reason these are often the “last bastions” of detection for advanced deepfakes is that generating them requires not just replicating pixels, but accurately simulating complex real-world physics, biological processes, and nuanced human behaviour – something current generative AI still finds challenging. Dedicated AI detection tools are trained to spot these specific, often microscopic, anomalies that are invisible to the naked eye.

July 16th 2025

(1) What is the source of the content? Is it from a reputable, known source, or an unfamiliar website or social media account?

(2) Does the context in which the image is presented seem plausible or sensationalist? Are there any accompanying claims?

(3) Are there visible inconsistencies in lighting, shadows, or reflections within the image, particularly around the subject’s face or body compared to the background?

(4) Do the edges of the person or object in question look unnaturally sharp, blurry, or pixelated compared to the rest of the image?

(5) Are there any unusual distortions or artifacts in facial features, such as eyes, teeth, ears, or hair? Do they look symmetrical or natural?

(6) Does the skin texture look overly smooth, waxy, or patchy? Are there any inconsistencies in skin tone or blemishes?

(7) If it’s a known person, does their expression, pose, or the situation depicted align with their known behaviour or public persona?

(8) Are there any oddities in the background details? Do objects appear distorted, or are there any illogical elements present?

(9) Ask: have I seen this image elsewhere? Or can I find other sources corroborating or debunking it using a reverse image search (e.g., Google Images, TinEye)?

(10) Are there any subtle digital artifacts, such as unusual patterns in textures (e.g., hair, fabric), inconsistencies in focus or resolution between different parts of the image, or tell-tale signs of digital “stitching” or “inpainting” that suggest a generative AI process was used?

June 3rd 2025

Many of us have heard the phrase “due diligence”; it means taking reasonable steps to avoid illegalities or harm.

Since the 15th century, when the concept was invented, our world has changed dramatically. But advances in AI today mean that what could have passed as “due” back then now constitutes a dramatic “fail”.

The reason is that criminals are using AI to commit crimes that involve deepfaking or faking humans and human content. This calls into question the fundamental premise of identity, which lies behind any due diligence protocol. From false identities to deepfaked senior executives; from AI-infiltrated market assessments to fake audio instructions, the age of AI demands a robust response from individuals in their checks, balances and vigilance.

So, if your boss, your team or your CFO is waiting to see if the AI threat will magically go away, you can gently direct them to the statistics.

Generative AI could enable $40 billion in US losses by 2027—Deloitte

UK law enforcement lacks the tools to effectively tackle AI-enabled crime—The Alan Turing Institute

8 million deepfakes will be shared in 2025, up from 500,000 in 2023—Gov.uk

The projected global cost of cybercrime by 2028 is $13.82 trillion—Sosafe

87% of global organisations faced an AI-powered cyberattack in the past year—The CFO

Deepfake AI market size will pass $3,889.8 million by 2032—Global Newswire

And in the meantime, get yourself a really good deepfake detector so you can double-check that what seems to be really is.

June 3rd 2025

For the most part, Gen X grew up in a pre-tech environment. No, the button on the back of Action Man that turned his eyes doesn’t count. Nor does the rocket-firing Boba Fett. Yes, okay, the Nintendo handheld c. 1981 does, but only in the way the explosive taste of sherbet UFO sweets compares to an atom bomb.

In fact, Gemini Advanced Research estimates that approximately 97.7% of the key technological domains defining an average individual’s experiences in 2025 were largely absent, or existed only in rudimentary and inaccessible forms, during the formative years of Generation X (circa 1975-1995).

If we wanted to call home, we had to queue to use a phone attached to a wall or go into a telephone booth. If we were invited to a meeting, it was in an actual room with humans and sometimes biscuits in it.

We inhabited the real world physically and intellectually, just as our human ancestors had.

As a result, our generation took identity for granted. When we spoke to someone on the phone, we didn’t question whether that person was really that person. Or a person at all.

Today, thanks to the criminals who use AI, presupposing humanity or human authorship puts people all over the world in danger.

We think that our new world should inform new assumptions:

People we see or meet online may be AI-generated deepfakes or AI-generated synthetics

People we see or meet online may not be human or fully human (real and deepfake splices)

Content we see or read online may not be made by a human

How do these assumptions change how you’ll interact online at work and at home?