July 18th 2025

Think of it like this: you wouldn’t send a human with a magnifying glass to find a tiny, undetectable virus, would you? You’d use a powerful, highly sensitive machine. Deepfakes are the digital viruses of our age, and your personal deepfake detector is the essential diagnostic tool.

Deepfake detection isn’t about guesswork; it’s about pure, unadulterated machine learning wizardry. We use AI models trained on millions of pieces of content, both real and fake. They learn to spot patterns so subtle, so minute, they’d make a needle in a haystack seem obvious.

It’s like having a digital forensic expert on your phone, constantly analysing:

These are the “fingerprints” AI leaves behind, even in the best fakes. Your eyes might see a perfectly plausible face, but our AI sees the mathematical anomalies that shout “fake”.

This isn’t technology reserved for government agencies or enormous corporations anymore. We’ve brought that very same, cutting-edge capability to your fingertips with VerifyLabs.AI.

Our app is ridiculously easy to use—just three taps and you’re done. It analyses images, video, and audio with up to 98% accuracy, giving you clear, colour-coded results:

If you’re keen on navigating the digital world safely, don’t rely on guesswork. Equip yourself with the power of AI to detect AI. It’s your definitive, easy-to-use solution for personal deepfake protection.

July 16th 2025

Remember the early deepfakes? Those grainy, often-jiggling videos with obvious lip-sync errors? Fast forward to 2025, and those “jiggle and glitch” days are long gone. Today’s deepfakes are sophisticated, convincing and the new weapon of choice for AI-driven criminals.

Gone are the days when deepfakes were just about fake celebrity videos. Now, they’re precise tools for calculated fraud and deception. Here are some of the emerging categories:

Financial fraud and business-email compromise (BEC)

Imagine a video call from your CFO instructing an urgent, high-value transfer—but it’s not them. Or a voice call from your CEO authorising a payment. We’ve seen chilling real-world cases, like a Hong Kong firm losing $25 million after a deepfake video call with their “CFO” and “colleagues.” These aren’t just one-off incidents; they are highly targeted, multi-modal attacks that combine deepfaked visuals and audio with social engineering.

Identity theft and account takeover

Biometric security, once our strong shield, is now a target. Deepfakes are being used to bypass facial recognition and voice authentication systems. Criminals use stolen data to create synthetic faces and voices, then “inject” them into verification processes, fooling systems designed to keep you safe.

Romance scams and extortion

Deepfake technology adds a terrifying new dimension to emotional manipulation. Scammers create realistic “digital twins” of victims or loved ones, exploiting personal connections for financial gain or even synthetic blackmail using fabricated intimate imagery.

Political misinformation and influence operations

Deepfakes can create fake statements from public figures, manipulate election narratives, or spread propaganda, threatening democratic processes and public discourse at scale.

Remote job interview fraud

A new frontier of deepfake crime involves using synthetic video and audio to impersonate candidates in remote interviews, gaining access to sensitive company information or even employment under false pretences.

The speed and accessibility of generative AI tools mean these sophisticated attacks are no longer reserved for highly skilled hackers. Off-the-shelf tools make it easier for anyone to create convincing fakes.

What does this mean for you?

In this rapidly evolving landscape, simple vigilance and common sense, while important, are often no match for an AI-powered adversary.

It’s time to equip yourself with the proactive defences required for the digital age.

July 16th 2025

So, if our eyes can’t catch them, what does? The answer lies in how AI sees and thinks differently than we do.

Imagine you’re inspecting a counterfeit banknote. You might look for obvious errors. But a machine inspects it for subtle anomalies, ink patterns, and micro-text that a human would never notice. That’s how AI approaches deepfake detection.

Instead of seeing a whole, recognisable face, deepfake detection AI processes content at a granular level, looking for microscopic inconsistencies and deviations from real-world physics and human biology.

Here’s a glimpse into what AI “sees”:

No human, no matter how vigilant, can spot these flaws, especially as deepfake technology continues to advance. This is precisely why AI is essential to fight AI.

Tools like VerifyLabs.AI leverage sophisticated algorithms and massive datasets to act as your digital detective, scanning for these invisible tells. We don’t rely on gut feelings; we rely on deep, data-driven analysis to tell you what’s real and what’s a dangerous fabrication.

Equip yourself with the power of AI to see what your eyes can’t.

July 16th 2025

It’s evening in a corporate office in a major world capital. The hustle and bustle has thinned as colleagues start to go home. An executive sits at their desk, wanting to tie up due diligence before leaving for the nightly commute.

The exec is examining a new client’s details and is uploading a scan of their passport.

It looks fine. The photo is nice and sharp. The layout is clear and all the markings are exactly where they should be.

Nothing about the passport makes the exec want to check any further. The proofs of address and other forms of ID look good too.

Nevertheless, they’re feeling uneasy.

Something the client said on their Zoom call is bothering them.

The client said the weather was sunny, but if they were in London, as they claimed, they would have known that it had been pouring with rain for the last two weeks.

In the meeting, the exec explained it away, assuming the client was being ironic or attempting a joke. But now the exec’s tummy feels inexplicably tight and, despite being tired, they wonder what to do.

If this were you, would you:

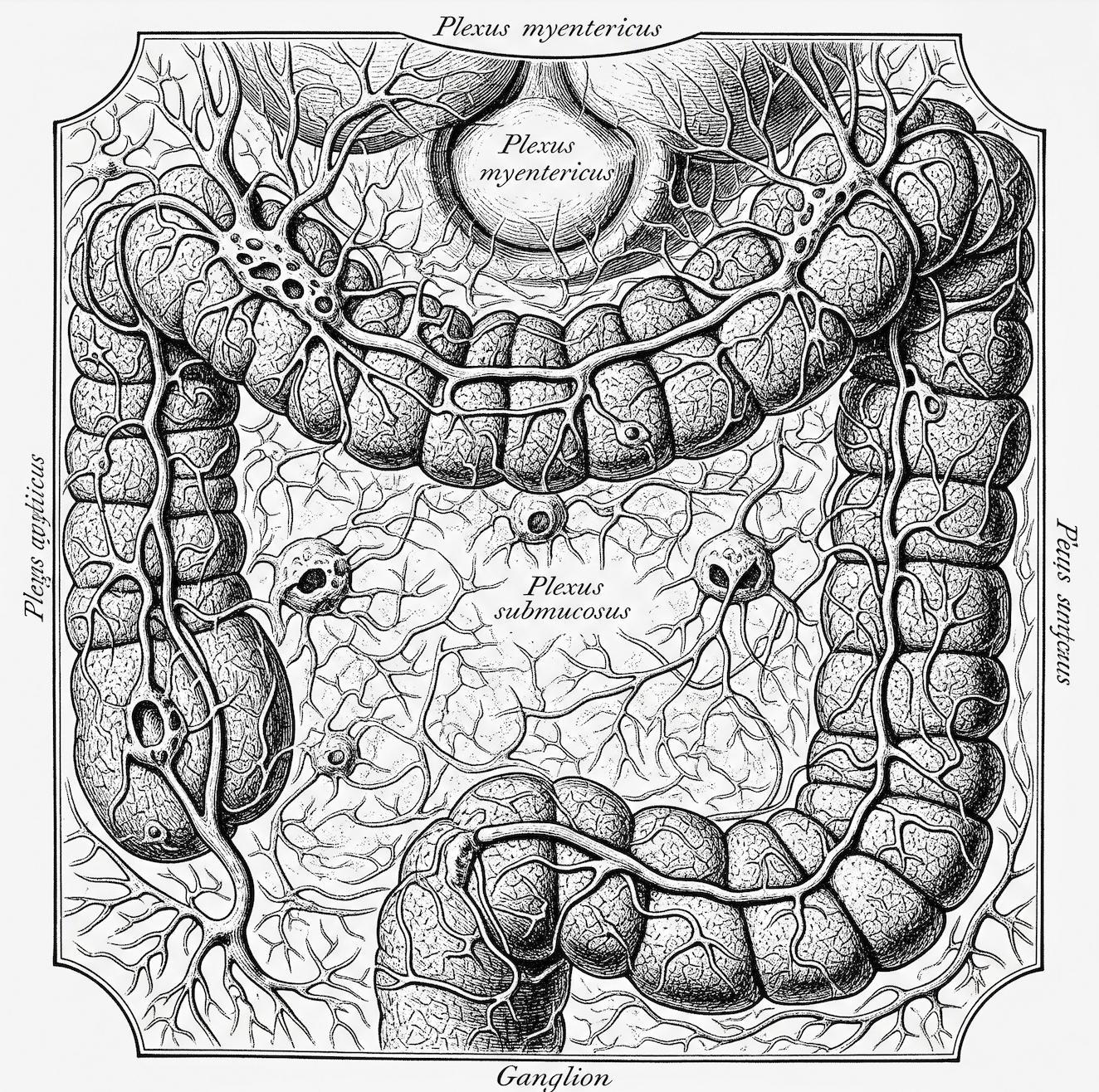

Our gut-brain connection is a powerful analytics system that often “knows” that further checks are needed before our conscious minds do. When faced with complex decisions where data is incomplete or overwhelming, your gut integrates a vast number of subconscious variables that your logical mind might overlook.

Your gut instinct is not a mystical feeling; it’s a biological and neurological event rooted in four key scientific principles:

Listening to your gut is listening to a powerful form of protective intelligence: a combination of real-time data from your “second brain” and high-speed analysis from your subconscious mind.

There are many accounts of deepfake attacks where victims override their initial bodily intuition, explaining it away.

Listen to your gut if:

Always Verify it first

July 16th 2025

We asked Gemini 2.5 Flash to use everything it knows (including the latest research and common limitations of current generative AI) to tell us how to spot deepfakes that are too good for the human eye to detect.

Gemini had a 3-second think about things and then said that the giveaways often lie in subtle, systemic inconsistencies in physiological and environmental details that betray a lack of genuine understanding of physics and human biology.

Here are its findings:

The reason these are often the “last bastions” of detection for advanced deepfakes is that generating them requires not just replicating pixels, but accurately simulating complex real-world physics, biological processes, and nuanced human behaviour – something current generative AI still finds challenging. Dedicated AI detection tools are trained to spot these specific, often microscopic, anomalies that are invisible to the naked eye.

July 16th 2025

(1) What is the source of the content? Is it from a reputable, known source, or an unfamiliar website or social media account?

(2) Does the context in which the image is presented seem plausible or sensationalist? Are there any accompanying claims?

(3) Are there visible inconsistencies in lighting, shadows, or reflections within the image, particularly around the subject’s face or body compared to the background?

(4) Do the edges of the person or object in question look unnaturally sharp, blurry, or pixelated compared to the rest of the image?

(5) Are there any unusual distortions or artifacts in facial features, such as eyes, teeth, ears, or hair? Do they look symmetrical or natural?

(6) Does the skin texture look overly smooth, waxy, or patchy? Are there any inconsistencies in skin tone or blemishes?

(7) If it’s a known person, does their expression, pose, or the situation depicted align with their known behaviour or public persona?

(8) Are there any oddities in the background details? Do objects appear distorted, or are there any illogical elements present?

(9) Ask: have I seen this image elsewhere? Or can I find other sources corroborating or debunking it using a reverse image search (e.g., Google Images, TinEye)?

(10) Are there any subtle digital artifacts, such as unusual patterns in textures (e.g., hair, fabric), inconsistencies in focus or resolution between different parts of the image, or tell-tale signs of digital “stitching” or “inpainting” that suggest a generative AI process was used?
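
For the curious, question (10) can be made a little more concrete. The sketch below is a toy illustration only, and not a description of how VerifyLabs.AI or any real detector works. It splits a grayscale image into square tiles, gives each tile a crude sharpness score (the average brightness jump between neighbouring pixels), and flags tiles that are far sharper or far softer than the typical tile. The function names, the tile size of 8 and the ratio of 4.0 are all assumptions chosen for illustration.

```python
from statistics import median


def tile_sharpness(gray, tile=8):
    """Score each full tile of a grayscale image for local sharpness.

    `gray` is a list of rows of brightness values (0-255). The score is the
    mean absolute difference between horizontally and vertically adjacent
    pixels inside the tile. This is a rough stand-in for "focus", chosen
    for simplicity; it is an assumption of this sketch, not a standard.
    """
    height, width = len(gray), len(gray[0])
    scores = {}
    for top in range(0, height - tile + 1, tile):
        for left in range(0, width - tile + 1, tile):
            diffs = []
            for y in range(top, top + tile):
                for x in range(left, left + tile):
                    if x + 1 < left + tile:  # right-hand neighbour in tile
                        diffs.append(abs(gray[y][x + 1] - gray[y][x]))
                    if y + 1 < top + tile:  # neighbour below in tile
                        diffs.append(abs(gray[y + 1][x] - gray[y][x]))
            scores[(top // tile, left // tile)] = sum(diffs) / len(diffs)
    return scores


def flag_outlier_tiles(scores, ratio=4.0):
    """Return tile positions whose sharpness is more than `ratio` times
    above or below the median tile (hypothetical threshold)."""
    typical = median(scores.values())
    return [
        pos
        for pos, score in sorted(scores.items())
        if score > typical * ratio or score * ratio < typical
    ]
```

A flagged tile is only a prompt to look more closely at that region. Ordinary photos with a blurred background or a plain wall will trigger it too, which is one reason dedicated detectors rely on far richer signals than a single measure like this.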